Introduction

I'm Sasaki from the Global Engineering Department of the GLB Division. This article summarizes a session based on a report from Mr. Nagasato, who is attending Data + AI SUMMIT 2023 (DAIS) on site.

Articles about the DAIS sessions are collected on the special site below.

https://www.ap-com.co.jp/data_ai_summit-2023/

This time, I would like to give an easy-to-understand summary of the talk I recently watched, "Advancements in Open Source LLM Tooling, Including MLflow". The talk centered on MLflow, an open source machine learning platform, and covered how machine learning training has evolved and how to address context problems in LLM applications. The intended audience is engineers interested in machine learning, engineers involved in training and deploying models, and developers of LLM applications.

The evolution of machine learning training and the importance of contextual search

According to the talk, the definition of machine learning training has changed in recent years, and contextual search has become important. This is because machine learning applications have become more complex and now deal with diverse data sources and algorithms. To accommodate this change, MLflow, an open source machine learning platform, was developed.

MLflow Overview and Features

MLflow is an open-source platform that provides capabilities such as machine learning experiment management and model packaging and deployment. Specifically, it has the following functions.

- Experiment management: Centrally manage machine learning experiments, and compare and analyze their results.

- Model packaging: Package machine learning models in a reusable format.

- Model deployment: Deploy the packaged model to production.

These features streamline the machine learning development process, helping you develop high-quality applications faster.

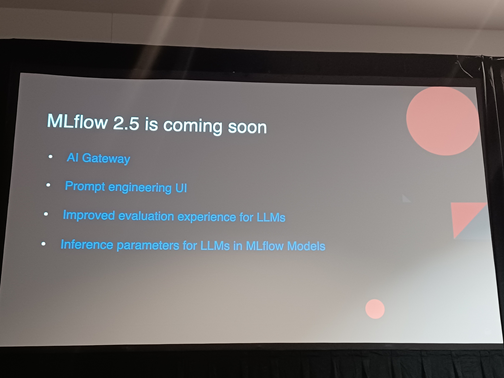

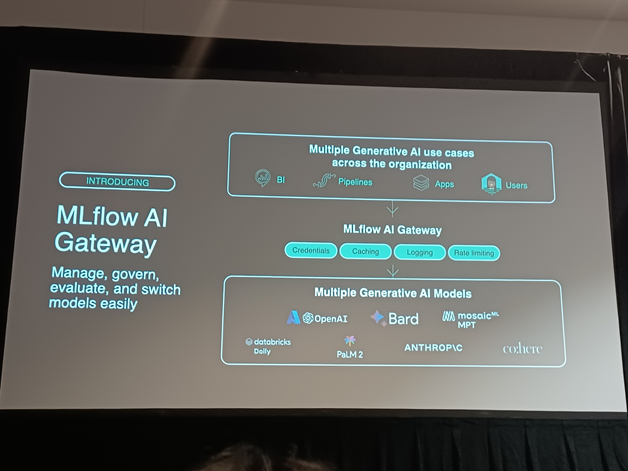

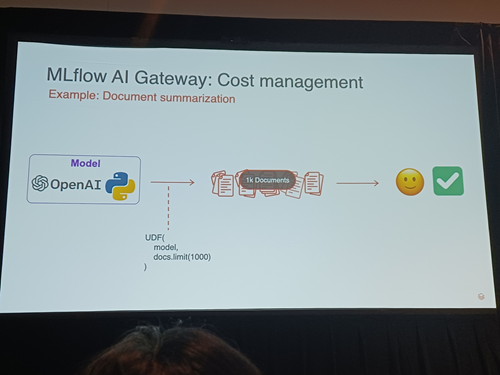

Latest concepts and features

MLflow is constantly incorporating the latest concepts and features. For example, recently added features include:

- Automated Machine Learning (AutoML): A feature that automates machine learning model selection and hyperparameter optimization.

- Model versioning: A feature that enables model versioning, comparison with past versions, and rollback.

These features further streamline the machine learning development process, helping you develop high-quality applications faster.

Data features and contextual issues in LLM applications

The talk emphasized that the data in LLM applications is unstructured and that context matters. Unstructured data is data without a predefined schema, such as text, images, and audio. Such data is said to be harder to extract information from and analyze than structured data (numerical, categorical, etc.). The unstructured data handled by LLM applications has the following characteristics.

- Large amount of data

- Diverse data formats

- Inconsistent data quality

These characteristics show that data context is very important in LLM applications.

You can tailor the output to your domain by providing additional context.

The talk explained that by providing additional context, the output can be tailored to the domain. Specifically, the following methods were mentioned.

- Leverage domain-specific knowledge

- Perform data preprocessing and feature engineering

- Adjust model architecture and hyperparameters

Using these methods will allow your LLM application to generate better output.
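To make the idea of providing additional context more concrete, here is a minimal sketch that picks the most relevant domain snippets for a question and places them in the prompt. It is not the method presented in the session: the word-overlap scoring is a deliberately simple stand-in for a real contextual search, and `SAMPLE_DOCS`, `retrieve`, and `build_prompt` are hypothetical names introduced for this example.

```python
import re
from collections import Counter

# Made-up domain snippets, for illustration only.
SAMPLE_DOCS = [
    "MLflow provides experiment management for machine learning runs.",
    "Unstructured data includes text, images, and audio.",
    "Model packaging stores a model in a reusable format.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_score(query, document):
    """Count the word tokens shared by the query and the document."""
    shared = Counter(tokenize(query)) & Counter(tokenize(document))
    return sum(shared.values())


def retrieve(query, documents, top_k=2):
    """Return up to top_k documents that share words with the query, best first."""
    scored = [(overlap_score(query, doc), doc) for doc in documents]
    relevant = [item for item in scored if item[0] > 0]
    relevant.sort(key=lambda item: item[0], reverse=True)
    return [doc for _, doc in relevant[:top_k]]


def build_prompt(question, documents, top_k=2):
    """Build a prompt that puts the retrieved context ahead of the question."""
    context = retrieve(question, documents, top_k)
    context_block = "\n".join(f"- {doc}" for doc in context)
    if not context_block:
        context_block = "- (no relevant context found)"
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {question}\n"
    )


if __name__ == "__main__":
    print(build_prompt("How does MLflow handle experiment management?", SAMPLE_DOCS))
```

In practice the overlap score would usually be replaced by an embedding-based similarity search, but the overall shape stays the same: retrieve domain context first, then pass it to the model together with the question.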

Summary

In this talk, the speaker focused on MLflow, an open source machine learning platform, and explained how machine learning training has evolved and how to address context problems in LLM applications. Applying these findings can make the development and operation of machine learning systems more efficient and lead to higher quality applications. I would like to keep following the evolution of open source machine learning platforms such as MLflow.

Conclusion

This content is based on reports from members participating in DAIS sessions on site. During the DAIS period, articles related to the sessions will be posted on the special site linked in the Introduction, so please take a look.

Translated by Johann

Thank you for your continued support!