Introduction

This is May from the GLB Division Lakehouse Department.

Based on reports from members attending Data + AI Summit 2023 (DAIS) on site, this article covers the session "Unlock the Next Evolution of the Modern Data Stack With the Lakehouse Revolution". The session, featuring Databricks' Allison Baker, Roberto Salcido, Kyle Hale, and Franco Pitano, showcases the next evolution of the modern data stack through the Lakehouse revolution and aims to help data analysts and data scientists unlock the value of their data. The target audience is data analysts, data scientists, data engineers, and data architects.

This blog is divided into two parts, and this is the second. Part 1 introduced how the Lakehouse revolution is driving the evolution of the modern data stack, along with the latest concepts, features, and services. Part 2 explains how to integrate and transform data using Fivetran and dbt Cloud, and how to improve the performance of data modeling with dbt Cloud and of data visualization with Tableau.

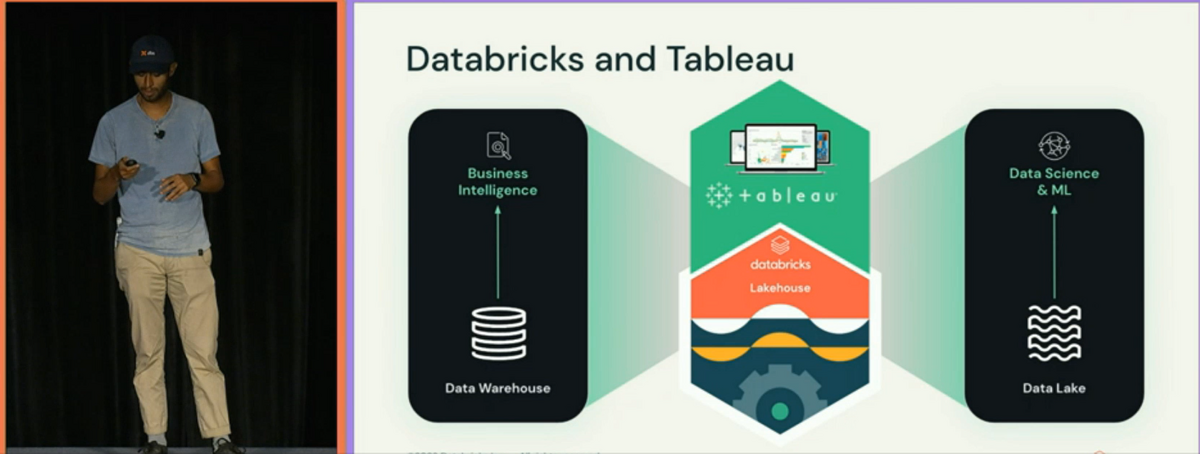

Data integration using Fivetran

First, the speakers showed how to use Fivetran to connect to data sources and ingest data into a SQL warehouse. Fivetran is a data integration platform that makes it easy to ingest data from a variety of data sources. The steps for data integration with Fivetran are summarized below.

- Create a Fivetran account

- Select a data source

- Configure the connection to the data source

- Ingest the data into the SQL warehouse

This allows data analysts and data scientists to easily access and integrate data sources.
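
As a rough illustration of the steps above, the following minimal Python sketch mimics the connect-and-ingest flow. It is not Fivetran's API: the in-memory SQLite database stands in for the SQL warehouse, and the `SOURCE_ROWS` list, the `raw_orders` table, and the `ingest` function are hypothetical names chosen for this example.

```python
import sqlite3

# Hypothetical source records; in practice a connector would read these
# from the configured data source.
SOURCE_ROWS = [
    {"order_id": 1, "customer": "alice", "amount": 120.0},
    {"order_id": 2, "customer": "bob", "amount": 75.5},
    {"order_id": 3, "customer": "carol", "amount": 42.0},
]


def ingest(conn, rows):
    """Load source rows into a raw landing table and return the row count."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_orders ("
        "order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    # INSERT OR REPLACE keeps the load idempotent when the same batch is re-run.
    conn.executemany(
        "INSERT OR REPLACE INTO raw_orders (order_id, customer, amount) "
        "VALUES (:order_id, :customer, :amount)",
        rows,
    )
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]


# An in-memory SQLite database stands in for the SQL warehouse (assumption).
warehouse = sqlite3.connect(":memory:")
loaded = ingest(warehouse, SOURCE_ROWS)
print(f"rows in raw_orders: {loaded}")
```

Landing the data in a raw table first, without reshaping it, keeps ingestion separate from the transformation step described in the next section.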

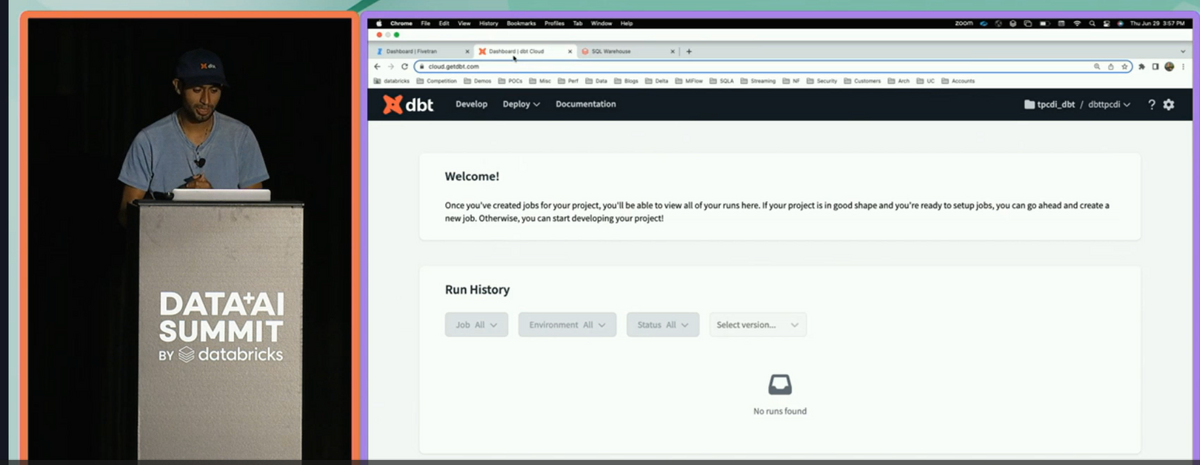

Data transformation and cleansing with dbt Cloud

The speakers then explained how to use dbt Cloud to transform and cleanse data and to implement the TPC-DI framework. dbt Cloud is a platform for data transformation and cleansing that can help improve data quality. The steps for data transformation and cleansing with dbt Cloud are summarized below.

- Create a dbt Cloud account

- Configure the project

- Implement the data transformation and cleansing

- Apply the TPC-DI framework

- Check data quality

This allows data analysts and data scientists to improve data quality and perform more accurate analyses.
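
The transformation and quality-check steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the code shown in the session: SQLite stands in for the warehouse, the `raw_trades` and `clean_trades` tables and the `failing_rows` helper are hypothetical, and the checks are written in the spirit of dbt's built-in `not_null` and `unique` tests, where a check passes when its query returns no rows.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical raw data with typical quality problems:
# inconsistent casing, stray whitespace, and a missing amount.
conn.execute("CREATE TABLE raw_trades (trade_id INTEGER, symbol TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO raw_trades VALUES (?, ?, ?)",
    [(1, " abc ", 100.0), (2, "XYZ", None), (3, "abc", 250.0)],
)

# Transformation and cleansing, expressed as a SELECT much like a SQL model:
# trim and upper-case the symbol, and drop rows without an amount.
conn.execute(
    """
    CREATE TABLE clean_trades AS
    SELECT trade_id, UPPER(TRIM(symbol)) AS symbol, amount
    FROM raw_trades
    WHERE amount IS NOT NULL
    """
)


def failing_rows(conn, query):
    """Return rows that violate a check; an empty list means the check passes."""
    return conn.execute(query).fetchall()


# Quality checks: no missing amounts and no duplicate trade IDs.
null_amounts = failing_rows(
    conn, "SELECT trade_id FROM clean_trades WHERE amount IS NULL"
)
duplicate_ids = failing_rows(
    conn,
    "SELECT trade_id FROM clean_trades GROUP BY trade_id HAVING COUNT(*) > 1",
)

print("null amounts:", null_amounts)
print("duplicate ids:", duplicate_ids)
```

Expressing each check as a query that returns the offending rows makes a failure easy to inspect, because the result itself shows which records need attention.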

About the latest concepts, features and services

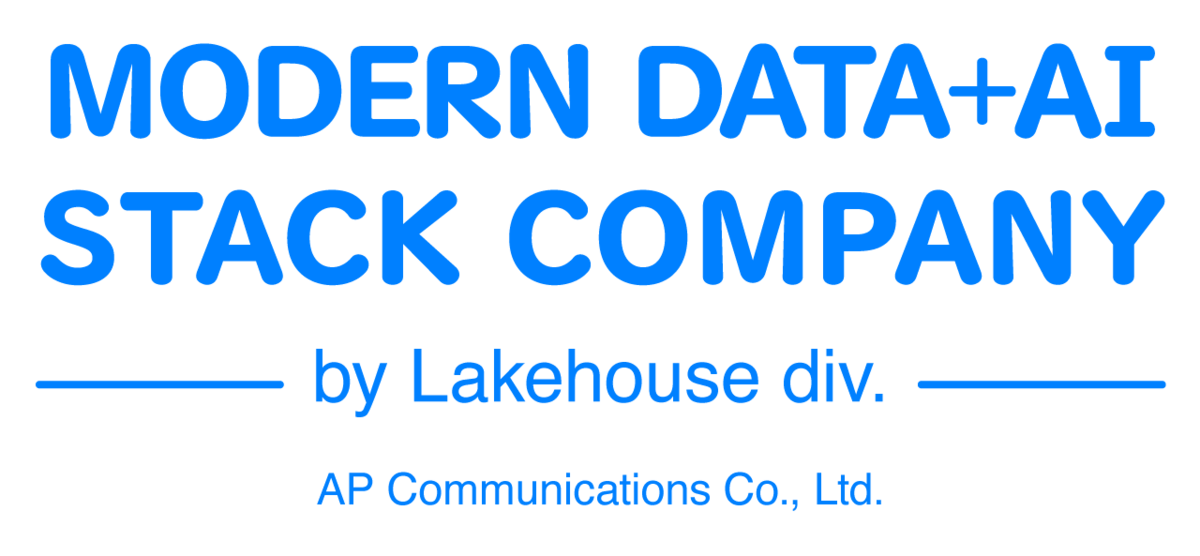

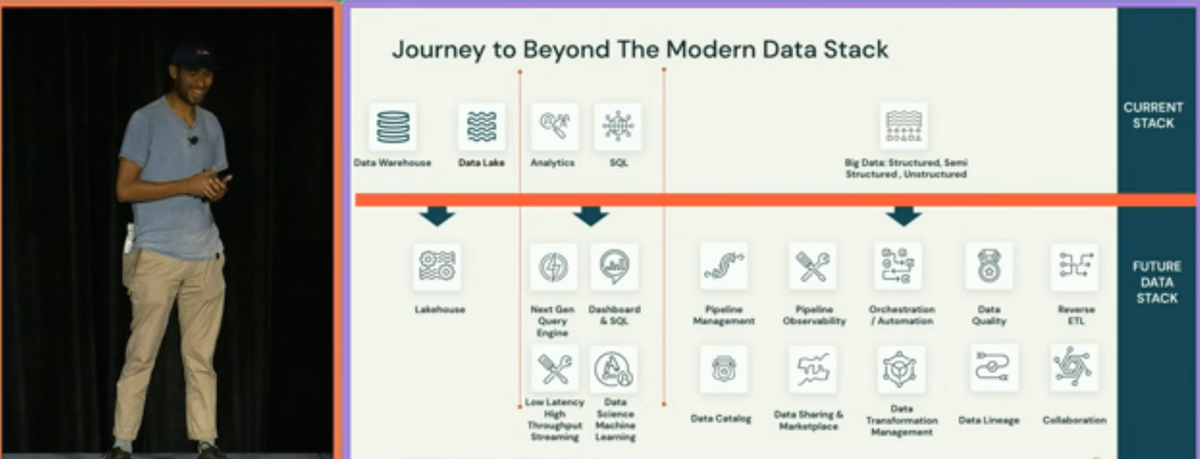

This talk showcased the next evolution of the modern data stack through the Lakehouse revolution. The Lakehouse is a data architecture that combines the capabilities of data lakes and data warehouses, which helps data analysts and data scientists unlock the full value of their data.

The speakers also touched on the latest features and services. The range of data utilization is expanding to include, for example, real-time data analysis and analysis using machine learning. With these features and services, data analysts and data scientists can analyze data more effectively.

Summary

This talk introduced methods for data integration and transformation using Fivetran and dbt Cloud, and showcased the next evolution of the modern data stack through the Lakehouse revolution. Data analysts and data scientists can use this information to get more value from their data. The talk also touched on the latest concepts, features, and services, which showed us that the range of data utilization is expanding. We will continue to follow the evolution of these technologies and provide information that is easy to understand for Japanese readers.

Conclusion

This content is based on reports from members attending DAIS sessions on site. During the DAIS period, articles related to the sessions will be posted on the special site below, so please take a look.

Translated by Johann

Thank you for your continued support!