Introduction

This is May from the GLB Division Lakehouse Department.

Based on reports from members attending Data + AI Summit 2023 (DAIS) on site, I will share an overview of the session "LLMOps: Everything You Need to Know to Manage LLMs". The session was presented by Eric Peter, Product Manager at Databricks. The talk aimed to provide the knowledge needed to bring LLMs (Large Language Models) into production. The target audience is technical people interested in data and AI, and anyone interested in putting LLMs into production.

I will summarize what I learned from the talk under the following three topics.

- Learn about the importance of inference tables and model serving

- AI model curation and optimization

- The Importance of Conversational Interfaces

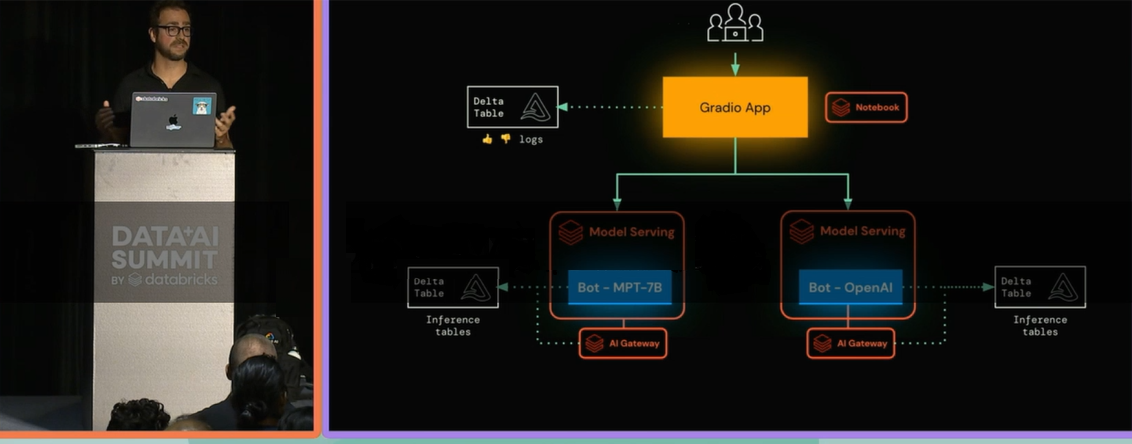

1. Learn about the importance of inference tables and model serving

First, let's look at Inference Tables and Model Serving, which Databricks proposes as key elements in putting LLMs into production. An inference table is a table that stores the inference results of a served model so that they can be used for monitoring and improving that model. Inference tables are important for the following reasons.

- Efficient management of model inference results

- Easier to monitor model performance

- Easier to improve and debug models

For these reasons, the creation of inference tables has become an important part of running models in production environments.
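To make the idea concrete, here is a minimal sketch of an inference table. It is not the Databricks feature itself: it uses an in-memory SQLite table as a stand-in, and `fake_model`, `serve_and_log`, `summarize`, and the `inference_log` schema are all hypothetical names chosen for illustration. It only shows the pattern of logging every request and response at serving time, then querying the log for monitoring.

```python
import sqlite3
import time


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a served LLM endpoint."""
    return prompt.upper()


def make_table() -> sqlite3.Connection:
    """Create an in-memory table that plays the role of an inference table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE inference_log ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "model_name TEXT, request TEXT, response TEXT, latency_ms REAL)"
    )
    return conn


def serve_and_log(conn: sqlite3.Connection, model_name: str, prompt: str) -> str:
    """Call the model and append the request/response pair to the table."""
    start = time.perf_counter()
    response = fake_model(prompt)
    latency_ms = (time.perf_counter() - start) * 1000.0
    conn.execute(
        "INSERT INTO inference_log (model_name, request, response, latency_ms) "
        "VALUES (?, ?, ?, ?)",
        (model_name, prompt, response, latency_ms),
    )
    conn.commit()
    return response


def summarize(conn: sqlite3.Connection) -> dict:
    """Monitoring query: request count and average latency per model."""
    rows = conn.execute(
        "SELECT model_name, COUNT(*), AVG(latency_ms) "
        "FROM inference_log GROUP BY model_name"
    ).fetchall()
    return {name: {"requests": n, "avg_latency_ms": avg} for name, n, avg in rows}


conn = make_table()
for prompt in ["hello", "what is llmops?"]:
    serve_and_log(conn, "demo-model", prompt)
print(summarize(conn))
```

Because every call is recorded, the same table can later be used for debugging individual responses or for building evaluation datasets.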

2. AI model curation and optimization

Next, the talk covered the importance of curated lists of AI models that have been optimized for specific tasks. The speaker also touched on how to use AutoML to create scalable, production-ready vector indexes.

AI model curation is the process of selecting the right model for a particular task and maximizing its performance. The following points were emphasized in the lecture.

- Understand the characteristics of the task: It is important to understand the purpose of the task, the type of data, the performance requirements of the model, etc.

- Choose the right model: Choosing the right model for the task is an efficient way to achieve high performance.

- Model optimization: You can further improve performance by optimizing the selected model.
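The selection step above can be sketched as a simple filter over a curated list. This is only an illustration under assumptions: the catalog entries, the `quality` and `latency_ms` figures, and the `choose_model` helper are hypothetical and do not come from the talk.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelEntry:
    name: str
    task: str
    quality: float     # hypothetical benchmark score in [0, 1]
    latency_ms: float  # hypothetical typical latency per request


# Hypothetical curated list; real entries would come from your own evaluation.
CURATED: List[ModelEntry] = [
    ModelEntry("small-chat", "chat", 0.70, 80.0),
    ModelEntry("large-chat", "chat", 0.90, 400.0),
    ModelEntry("summarizer", "summarization", 0.85, 150.0),
]


def choose_model(
    task: str, max_latency_ms: float, catalog: List[ModelEntry] = CURATED
) -> Optional[ModelEntry]:
    """Return the highest-quality model for the task within the latency budget."""
    candidates = [
        m for m in catalog if m.task == task and m.latency_ms <= max_latency_ms
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda m: m.quality)


print(choose_model("chat", 100.0))
```

The point of the sketch is that task characteristics (here, the task type and a latency requirement) narrow the list first, and quality decides among what remains.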

3. The Importance of Conversational Interfaces

Finally, the talk explained why building conversational interfaces matters to many customers: they can reduce costs, increase quality, and improve efficiency. Conversational interfaces are technologies that allow humans and computers to communicate in natural language, such as chatbots and voice assistants.

The advantages of a conversational interface are:

- Cost savings: Replacing human operators with chatbots can reduce labor costs.

- Quality improvement: As AI learns, it will be able to give better answers and responses.

- Improved efficiency: A chatbot is available 24 hours a day, 365 days a year and can respond quickly to customer inquiries.

The talk also showed how to use MLflow to evaluate chatbot prompts and select the best one. MLflow is an open source platform that helps manage machine learning experiments and deploy models.
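The idea of evaluating prompts and picking the best one can be sketched without any particular tool. The example below does not use MLflow and is not the method shown in the talk. It is a plain-Python illustration in which `stub_llm`, the evaluation set, the templates, and the exact-match metric are all hypothetical. In a real setup, each template's score could be recorded per run with MLflow tracking calls such as `mlflow.log_param` and `mlflow.log_metric`.

```python
FACTS = {"capital of france": "Paris", "2 + 2": "4"}


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a chatbot model.

    It answers tersely only when the prompt asks for one word.
    """
    text = prompt.lower()
    answer = next((v for k, v in FACTS.items() if k in text), "unknown")
    if "one word" in text:
        return answer
    return f"I believe the answer is {answer}."


# Hypothetical evaluation set of (question, expected answer) pairs.
EVAL_SET = [
    ("What is the capital of France?", "Paris"),
    ("What is 2 + 2?", "4"),
]

# Hypothetical prompt templates to compare.
TEMPLATES = {
    "plain": "Q: {question}",
    "concise": "Answer in one word. Q: {question}",
}


def exact_match_rate(template: str, eval_set=EVAL_SET) -> float:
    """Fraction of questions whose model output equals the expected answer."""
    hits = sum(
        stub_llm(template.format(question=q)) == expected
        for q, expected in eval_set
    )
    return hits / len(eval_set)


def pick_best(templates=TEMPLATES):
    """Score every template and return the best name plus all scores."""
    scores = {name: exact_match_rate(t) for name, t in templates.items()}
    best = max(scores, key=scores.get)
    return best, scores


print(pick_best())
```

Exact match is a deliberately strict metric here. For real chatbots a softer metric (for example, human ratings or similarity scores) is usually more appropriate.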

Summary

Through this talk, I learned what is needed to put LLMs into production. Features such as inference tables, vector search, and feature serving are expected to make operating models in production more efficient and effective. I also learned about the importance of conversational interfaces and how to evaluate chatbots using MLflow. In the future, I would like to continue to provide articles that are easy to understand for Japanese readers while incorporating the latest knowledge.

Conclusion

This content is based on reports from members attending DAIS sessions on site. During the DAIS period, articles related to the sessions will be posted on the special site below, so please take a look.

Translated by Johann

Thank you for your continued support!